03.24.26 By Bridgenext Think Tank

Databricks has become one of the most consequential platform decisions in enterprise data strategy. Its Unified Lakehouse model combining data engineering, analytics, and machine learning in a single environment offers compelling capabilities for organizations managing large-scale data workloads. Adoption has grown accordingly, with Databricks now serving thousands of enterprise customers globally, including more than 60% of the Fortune 500.

Yet in our work with enterprise data teams across financial services, healthcare, and technology sectors, a consistent pattern emerges; the distance between what the platform is capable of and what most organizations are using it for is significant. Licenses are active, clusters are running, and basic pipelines are in production, but the deeper value layers of the platform remain underutilized.

This blog provides a practitioner’s perspective on where that gap tends to form, why it persists, and what a more deliberate approach to platform adoption looks like in practice.

Enterprise investment in data and AI platforms has accelerated sharply over the past several years, driven by the convergence of cloud infrastructure maturity, AI/ML ambition, and the increasing strategic weight of data-driven decision-making. Databricks sits at the center of that investment for many organizations.

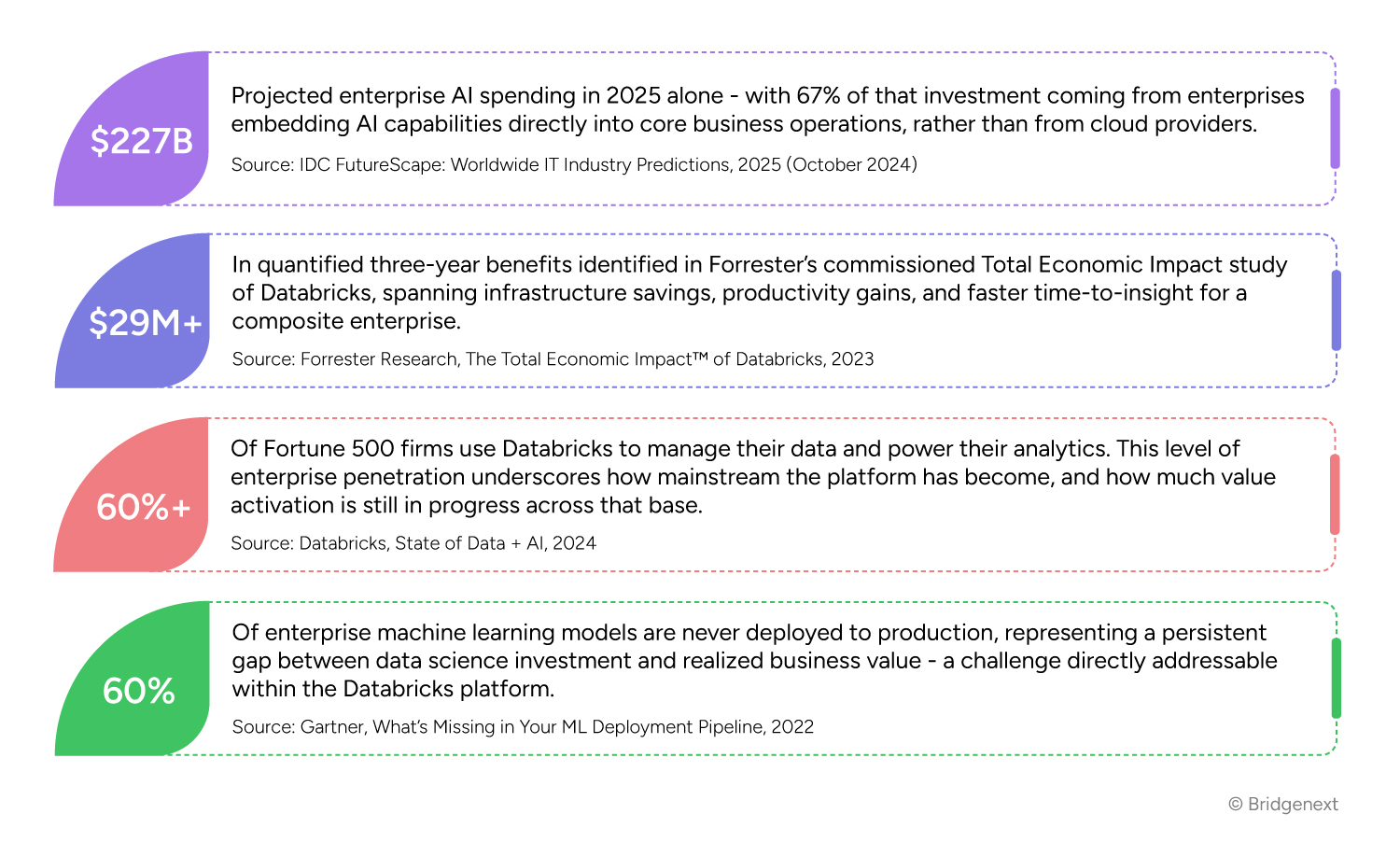

A few data points help frame the scale of what is at stake:

These figures point to a consistent theme; investment in data platforms is not the limiting factor. The gap between spend and return tends to form in how platforms are adopted, governed, and operationalized over time.

Based on our work across enterprise Databricks deployments, the following patterns appear repeatedly. None of them reflect a failure of the technology. Each represents an opportunity to capture value that is within reach.

One of the most common adoption patterns we observe is organizations migrating to Databricks and recreating the architecture they had before with batch pipelines, scheduled jobs, and a query layer that resembles a traditional data warehouse. This is understandable; it reduces short-term risk and leverages existing team skills.

The challenge is that this approach captures only a portion of what a unified lakehouse architecture makes possible. Databricks is designed to support streaming and batch workloads in a single environment, enable real-time analytics alongside historical reporting, and serve as the foundation for machine learning workflows. When it is used primarily as a warehouse replacement, the platform investment is not misplaced, it is simply underweighted against the platform’s full capability set.

The shift from using Databricks as a data warehouse replacement to using it as a unified intelligence platform is where the value curve tends to inflect meaningfully.

Delta Lake is the storage layer that underpins Databricks’ lakehouse architecture, and it includes capabilities that go beyond what many teams currently use Lakeflow Declarative Pipelines for including declarative, low-maintenance pipeline for development; automatic liquid clustering for automated data layout optimization and change data feed for efficient incremental processing patterns.

In practice, most teams default to conventional Spark pipelines that do not leverage these features. The result is pipelines which require more manual maintenance, perform less efficiently at scale, and accumulate technical debt as data volumes grow. Closing this gap typically requires a combination of architectural review and targeted upskilling, rather than significant re-platforming.

“Unity Catalog”, Databricks’ unified governance layer, provides centralized access control, data lineage tracking, and audit capabilities across the entire lakehouse. It is foundational for organizations with compliance obligations, cross-functional data sharing requirements, or ambitions to scale AI use cases responsibly.

Despite its availability and the fact that Databricks now makes Unity Catalog the default for all new account provisioning, a significant number of existing enterprise deployments have either not activated it fully or have implemented it without a coherent governance strategy behind it. The downstream effects are familiar – inconsistent data access, difficult-to-trace lineage, and reduced trust in data, particularly for AI applications across the organization.

Organizations that implement structured governance early, report meaningfully shorter timelines for data product development and lower friction in cross-team data sharing.

Databricks has invested substantially in its end-to-end machine learning suite, now unified under the Mosaic AI brand “MLflow” for experiment tracking and model lifecycle management. Offerings include ‘Databricks Feature Engineering’ for consistent feature development and reuse, and ‘Mosaic AI Model Serving’ for scalable production deployment. These components are designed to close the loop between data and deployed AI within a single governed environment.

In many organizations, however, data science workflows remain partially or fully decoupled from the lakehouse. Data is exported to separate environments for model training, models are deployed through external infrastructure, and monitoring is handled ad hoc. This fragmentation increases infrastructure complexity, makes model governance harder, and is a contributing factor to the persistently high rate of models that never reach production.

Organizations that consolidate ML workflows within the Databricks platform tend to see improvements in model deployment frequency and more consistent feature quality though outcomes vary meaningfully by organizational context and existing process maturity.

Databricks’ consumption-based pricing model aligns cost with usage which is a genuine advantage when workloads are well-understood and clusters are appropriately configured. Without deliberate cost governance, however, spend can grow faster than the value being generated.

Common patterns include all-purpose clusters running without idle timeout policies, development and production workloads sharing infrastructure, and autoscaling configurations that are not calibrated to actual workload profiles. These are addressable issues, but they require visibility into cluster utilization, workload classification, and a governance model that includes cost accountability at the team level.

In our experience, organizations that introduce FinOps discipline alongside platform adoption rather than as a reactive response to an unexpected invoice tend to see more sustainable cost trajectories as data volumes and user counts grow.

These patterns are worth addressing not only for their individual impact, but because they tend to reinforce one another over time. For example:

The longer these gaps persist, the more organizational inertia builds around the workarounds that fill them, making change progressively more difficult. This is not a reason for urgency for its own sake, but it is a reason for intentionality. A structured assessment of where your deployment stands today is a practical and low-risk starting point for most organizations.

Platform maturity is not achieved at go-live. It is built incrementally through deliberate architectural decisions, governed adoption, and a clear view of where the next layer of value sits.

Bridgenext partners with enterprises at every stage of their Databricks journey. We assist organizations in early adoption to establish strong architectural foundations and help those with mature deployments seeking to activate underutilized capabilities. Our approach is grounded in three areas:

We begin by developing a clear picture of where your current deployment stands, architecture patterns in use, governance posture, cost profile, and the gap between current utilization and available capability. The output is a prioritized roadmap that identifies practical next steps sequenced by value and feasibility rather than a comprehensive transformation agenda that is difficult to act on.

Our delivery teams bring experience from real enterprise deployments across industries. Whether the priority is pipeline modernization with Lakeflow Declarative Pipelines, a Unity Catalog implementation, Mosaic AI model operationalization, or streaming architecture design, we work alongside your team to deliver outcomes that are maintainable and scalable without creating dependency on ongoing external support.

Technology outcomes are more durable when the internal team has the knowledge and confidence to own what has been built. We embed enablement into our delivery model through documentation, paired working sessions, and structured knowledge transfer so that capability remains within the organization after the engagement concludes.

Bridgenext offers a structured Databricks Value Assessment designed to identify where your platform can deliver greater returns. Our team brings certified expertise and real-world enterprise experience to every engagement. Visit our Databricks page or connect with us to schedule a conversation.